Edge AI Vision-Guided Robotic Arm Control | Real-Time Object Detection with Jetson Orin Nano

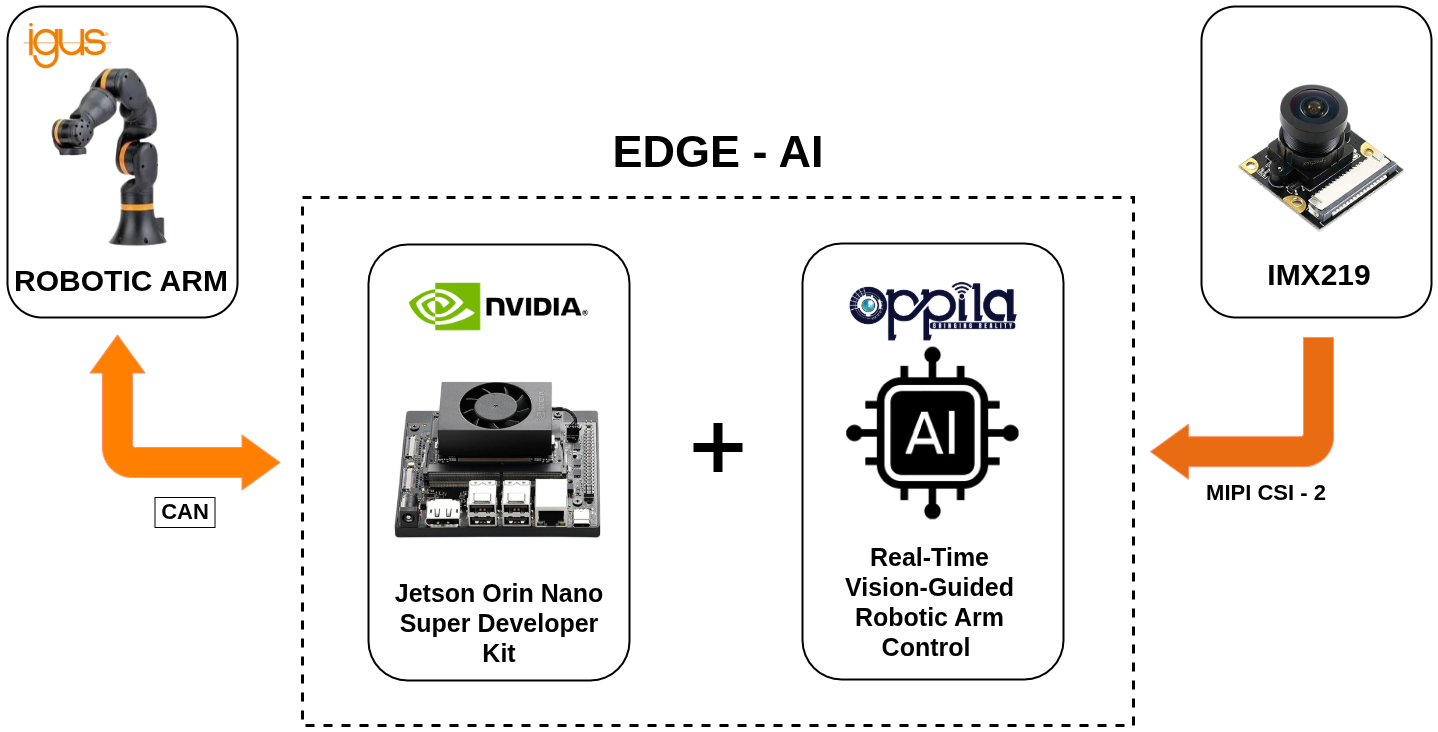

System Overview

This stage introduces real-time object detection with motion-interrupt control.

The system integrates:

- Jetson Orin Nano Super Developer Kit

- Sony IMX219 (MIPI CSI-2 camera)

In this implementation, the robotic arm operates in a continuous motion state. When an object is detected within the camera frame and validated above a confidence threshold, the system immediately sends a stop command to the robotic arm.

This creates a vision-triggered motion interruption.

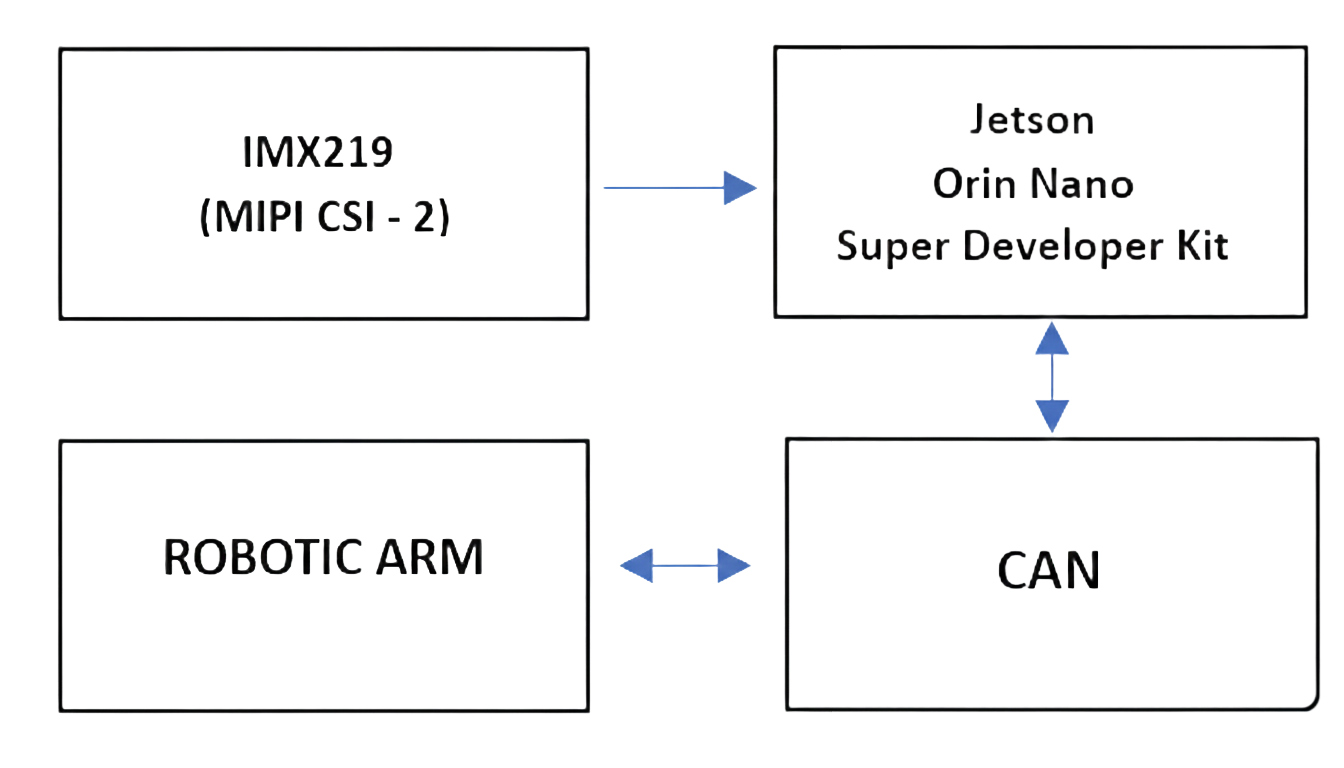

Hardware Architecture

- Video input through MIPI CSI-2

- AI inference executed on Jetson GPU

- Motion control via CAN protocol

- Live visualization on monitor

AI Processing & Motion Control Flow

- Robotic arm operates in continuous motion mode

- IMX219 camera captures live video stream

- AI model performs real-time object detection

- Detection confidence threshold is evaluated

- If object detected → Stop command generated

- CAN frame transmitted to robotic arm

- Robotic arm immediately halts

If no object is detected, the arm continues its predefined motion cycle.
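The flow above can be sketched as a simple per-frame loop. This is a minimal illustration, not the project's actual code: the `Detection` type, the `run_cycle` function, the 0.85 threshold, and the `send_stop` callback are all hypothetical stand-ins for the real detector output and CAN transmit path.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Detection:
    label: str         # class name reported by the detector
    confidence: float  # score in the range 0.0 to 1.0


def run_cycle(
    frames: Iterable[List[Detection]],
    send_stop: Callable[[], None],
    threshold: float = 0.85,  # assumed value; the article only says "configurable"
) -> Optional[int]:
    """Scan per-frame detections and stop at the first confident hit.

    Returns the index of the frame that triggered the stop, or None if the
    arm was allowed to finish its motion cycle.
    """
    for index, detections in enumerate(frames):
        if any(d.confidence >= threshold for d in detections):
            send_stop()  # in a real system this would transmit a CAN frame
            return index
    return None


# Simulated detector output for three consecutive frames.
frames = [
    [],                        # nothing in view
    [Detection("box", 0.40)],  # below threshold, arm keeps moving
    [Detection("box", 0.91)],  # above threshold, stop is issued
]
stop_log: List[str] = []
triggered_at = run_cycle(frames, lambda: stop_log.append("STOP"))
print(triggered_at, stop_log)
```

Passing the stop action in as a callback keeps the decision logic testable on a desk without a camera or a CAN interface attached.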

Key Features

- Real-time GPU-accelerated object detection

- Motion interruption upon detection

- Configurable confidence threshold

- Fully edge-based processing (no cloud)

- Deterministic CAN communication

- Low-latency response system

Block Diagram

An architectural overview detailing the integration of MIPI CSI-2 video input, Jetson edge AI inference, and CAN bus motion control.

YouTube Video

Watch the Jetson Orin Nano perform real-time object detection to intelligently interrupt and control the robotic arm's motion.

Conclusion

Integrating vision-guided intelligence at the edge transforms robotic control from blind, repetitive motion into dynamic, reactive automation. The Jetson Orin Nano, paired with MIPI CSI-2 cameras and CAN bus communication, provides a completely localized, low-latency framework for object detection and instantaneous motion interruption.

This architecture not only enhances safety and precision on the factory floor but also demonstrates that true intelligent automation can be achieved entirely at the edge—without the limitations of cloud dependency or generic OEM software.

FAQ

Vision-guided robotic arm control integrates AI-powered cameras, such as the Sony IMX219 via MIPI CSI-2, directly onto edge devices like the Jetson Orin Nano. This allows the arm to dynamically 'see' objects, enabling real-time motion interruption without relying on cloud computation.

The NVIDIA Jetson Orin Nano provides high-performance, GPU-accelerated AI inference tailored for edge applications. For smart automation deployments in industrial hubs across India, it delivers the low-latency object detection needed for real-time safety and precision, without the network round trip of cloud-dependent architectures.

The MIPI CSI-2 interface allows for high-bandwidth, direct communication between camera sensors (like the Sony IMX219) and processors. This results in ultra-low latency, uncompressed video streaming crucial for instantaneous AI object detection and immediate robotic reaction.
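On Jetson boards, a CSI camera such as the IMX219 is commonly opened through a GStreamer pipeline built around NVIDIA's `nvarguscamerasrc` element. The helper below only assembles the pipeline string. The `csi_pipeline` name, the resolution, the frame rate, and the sensor index are assumed values for illustration, and the article does not state whether this project uses GStreamer at all.

```python
def csi_pipeline(sensor_id: int = 0, width: int = 1280, height: int = 720,
                 fps: int = 30) -> str:
    """Build a GStreamer pipeline string for a Jetson CSI camera.

    Frames are captured into NVMM (GPU-accessible) memory, converted by
    nvvidconv, and handed to the application as BGR through an appsink.
    """
    return (
        f"nvarguscamerasrc sensor-id={sensor_id} ! "
        f"video/x-raw(memory:NVMM), width={width}, height={height}, "
        f"framerate={fps}/1 ! "
        "nvvidconv ! video/x-raw, format=BGRx ! "
        "videoconvert ! video/x-raw, format=BGR ! "
        # Keep only the newest frame so detection never runs on stale images.
        "appsink drop=true max-buffers=1"
    )


pipeline = csi_pipeline()
print(pipeline)
```

With an OpenCV build that includes GStreamer support, a string like this can be passed to `cv2.VideoCapture(pipeline, cv2.CAP_GSTREAMER)`.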

The CAN Bus (Controller Area Network) protocol acts as the robust nervous system of the robotic controller. Its differential signaling and priority-based arbitration give noise-resistant, predictable transmission of motion profiles and emergency stop commands to the servo drives, making it highly reliable for industrial and manufacturing environments.
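To make the stop command concrete, here is a rough sketch of how a classic CAN frame is laid out for Linux SocketCAN (a 32-bit identifier, a length byte, three padding bytes, and eight data bytes). The identifier `0x001` and the one-byte payload are hypothetical; the real values depend on the arm's controller, which the article does not specify.

```python
import struct
from typing import Tuple

CAN_FRAME_FMT = "=IB3x8s"  # can_id, data length, 3 pad bytes, 8 data bytes
STOP_CAN_ID = 0x001        # hypothetical: a low ID gives the frame high priority
STOP_PAYLOAD = b"\x00"     # hypothetical one-byte "halt" opcode


def build_can_frame(can_id: int, data: bytes) -> bytes:
    """Pack a standard-ID classic CAN frame in SocketCAN's 16-byte layout."""
    if not 0 <= can_id <= 0x7FF:
        raise ValueError("standard CAN identifiers are 11 bits wide")
    if len(data) > 8:
        raise ValueError("classic CAN carries at most 8 data bytes")
    return struct.pack(CAN_FRAME_FMT, can_id, len(data), data.ljust(8, b"\x00"))


def parse_can_frame(frame: bytes) -> Tuple[int, bytes]:
    """Unpack a 16-byte SocketCAN frame into (identifier, payload)."""
    can_id, length, data = struct.unpack(CAN_FRAME_FMT, frame)
    return can_id, data[:length]


frame = build_can_frame(STOP_CAN_ID, STOP_PAYLOAD)
print(len(frame), parse_can_frame(frame))
```

On a Linux system with a configured CAN interface, bytes built this way can be written to a raw socket created with `socket.socket(socket.AF_CAN, socket.SOCK_RAW, socket.CAN_RAW)` and bound to the interface name (for example `("can0",)`, an assumed name).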

While PLCs are still standard for basic logic, Edge AI platforms like the Jetson Orin Nano are increasingly replacing or augmenting PLCs for tasks requiring complex, vision-based decision-making—transforming rigid robotic cells into flexible, intelligent automation systems.

By continuously processing the visual feed at the edge, the system can quickly identify unexpected objects or human presence within the operational envelope. When the confidence threshold is met, it triggers a CAN stop command; a single CAN frame takes well under a millisecond on the wire at common bit rates such as 500 kbit/s or 1 Mbit/s, which significantly strengthens safety in fast-paced warehouses.

A custom GUI, like the ones developed by Oppila for the Jetson platform, allows manufacturing plants to strip away bloated OEM software, ensuring operators only see mission-critical data. This bespoke approach allows for seamless integration of specialized AI tools specific to their exact production line.

Yes, the Sony IMX219 is an excellent sensor for edge integration. Paired directly with a Jetson Orin Nano via the CSI-2 interface, it provides the resolution and frame rate needed to run accurate, real-time object detection models in varying industrial lighting.

No. The defining feature of Edge AI is that all machine learning inference and motion calculations occur locally on the Jetson Orin Nano processor. This keeps image data on the device and removes network connectivity as a source of downtime.

Because both platforms utilize Linux environments, transitioning fundamental control scripts (like CAN bus interfaces) is straightforward. Moving to the Jetson unlocks the GPU, allowing developers to seamlessly append complex computer vision workflows onto their existing control foundations.

Absolutely. By training the edge-deployed AI model on defect datasets, the robotic arm can continuously scan products on an assembly line. If a defect is detected via the IMX219 camera, the system can immediately halt the line or reroute the defective unit.

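By combining the direct CSI-2 camera feed, localized GPU inference on the Jetson, and deterministic CAN bus commands, the total round-trip latency, from sensing an object to the physical halting of the servos, can be reduced to the millisecond range. The exact figure depends on the camera frame rate, the model's inference time, and how quickly the drives can decelerate.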
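A back-of-the-envelope budget shows where the time goes between an object appearing and the stop command reaching the bus. The frame rate, inference time, payload size, and bit rate below are assumptions chosen for illustration, not measurements from this system. The frame length uses the fixed fields of a standard 11-bit data frame (44 bits plus 8 bits per data byte) and ignores stuff bits.

```python
def can_frame_time_ms(data_bytes: int, bitrate: int) -> float:
    """Wire time of one standard CAN data frame, excluding stuff bits."""
    bits = 44 + 8 * data_bytes  # SOF, ID, control, CRC, ACK, EOF plus payload
    return bits / bitrate * 1000.0


def detection_to_command_ms(fps: float, inference_ms: float,
                            data_bytes: int, bitrate: int) -> float:
    """Worst-case wait for the next camera frame, plus inference, plus CAN."""
    frame_period_ms = 1000.0 / fps
    return frame_period_ms + inference_ms + can_frame_time_ms(data_bytes, bitrate)


# Assumed figures: 30 fps camera, 20 ms inference, 1-byte stop frame, 500 kbit/s.
budget = detection_to_command_ms(fps=30, inference_ms=20.0,
                                 data_bytes=1, bitrate=500_000)
print(f"camera-to-bus budget: {budget:.2f} ms")
```

Under these assumptions the CAN transfer is a tiny fraction of the total; the camera frame period and the inference time dominate. The figure also stops at the bus: mechanical deceleration of the arm comes on top and is not modeled here.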

Yes, once a customized Edge AI inference model and control architecture are refined on a Jetson Orin Nano, the exact software image and hardware topology can be rapidly replicated and deployed across numerous robotic cells and factory locations globally.

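CAN Bus was originally developed for automotive use and is widely used in harsh industrial environments because its differential signaling tolerates electromagnetic interference (EMI). Unlike standard USB or Ethernet, CAN resolves bus contention through priority-based arbitration, so the highest-priority message (for example, a stop command) sees a bounded, predictable delay, which is non-negotiable for synchronized, multi-axis motion.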
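The timing behavior described above rests on CAN's bitwise arbitration: when several nodes start transmitting at once, a dominant 0 bit overrides a recessive 1, so the frame with the numerically lowest identifier wins the bus and is not delayed by the others. The small simulation below illustrates that rule; the function name and the identifiers in the example are made up.

```python
from typing import List


def arbitrate(ids: List[int], id_bits: int = 11) -> int:
    """Return the identifier that wins CAN arbitration among competing nodes."""
    if not ids:
        raise ValueError("at least one identifier is required")
    contenders = set(ids)
    for bit in range(id_bits - 1, -1, -1):  # identifiers are sent MSB first
        # The bus behaves like a wired AND: any dominant 0 pulls it to 0.
        bus_level = min((cid >> bit) & 1 for cid in contenders)
        # Nodes that sent a recessive 1 but read a 0 back off and retry later.
        contenders = {cid for cid in contenders if (cid >> bit) & 1 == bus_level}
    (winner,) = contenders
    return winner


# A hypothetical stop frame (0x001) competes with two lower-priority frames.
print(hex(arbitrate([0x120, 0x001, 0x7FF])))
```

This is why safety-relevant commands are usually given low identifiers: they win arbitration against routine traffic.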

Oppila specializes in building tailored embedded hardware and software solutions. Whether you require custom MIPI CSI-2 bridge boards for specialized sensors, bespoke AI model training, or unique CAN topologies, we align the architecture precisely with your operational demands.

Sectors such as dynamic material handling, collaborative assembly (cobotics), pharmaceutical packaging, and automated electronics inspection see immediate ROI through increased safety, faster throughput, and reduced defect rates by employing real-time visual interrupts.

The AI model assigns a probability score (confidence) to every object it 'sees'. The threshold is a configurable safety net; the system will only issue a motion interrupt command if the AI is, for example, 85% certain the object in its path matches the target priority profile.
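As a small illustration of that rule, the check below fires only when a detection both belongs to a target class and clears the threshold. The dictionary keys, the class names, and the 0.85 default are assumptions made for the example, not the project's actual data format.

```python
from typing import Dict, List, Set, Union

DetectionDict = Dict[str, Union[str, float]]


def should_interrupt(detections: List[DetectionDict], targets: Set[str],
                     threshold: float = 0.85) -> bool:
    """True if any detection of a target class meets the confidence threshold."""
    return any(
        d["label"] in targets and d["score"] >= threshold
        for d in detections
    )


detections = [
    {"label": "box", "score": 0.97},     # confident, but not a target class
    {"label": "person", "score": 0.60},  # target class, but below threshold
]
print(should_interrupt(detections, targets={"person"}))

detections.append({"label": "person", "score": 0.92})
print(should_interrupt(detections, targets={"person"}))
```

Raising the threshold reduces false stops at the cost of possibly missing low-confidence detections; lowering it does the opposite.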

The architecture supports continuous, fluid multi-axis motion sequences. The AI vision system runs asynchronously alongside the motion controller, constantly scanning the environment to interject an immediate halt command only when the predefined condition is triggered.
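One common way to run vision alongside motion is a worker thread that raises a shared flag, which the motion loop checks on every step. The sketch below uses Python's `threading.Event` with simulated confidence scores and a simulated motion loop; all names, timings, and the threshold are hypothetical, and the actual system may be structured differently.

```python
import threading
import time
from typing import Iterable

stop_event = threading.Event()  # shared flag: set by vision, read by motion


def vision_worker(scores: Iterable[float], threshold: float = 0.85) -> None:
    """Stand-in for the detector: raise the flag at the first confident score."""
    for score in scores:
        if score >= threshold:
            stop_event.set()
            return
        time.sleep(0.001)  # simulated per-frame processing time


def motion_loop(max_steps: int) -> int:
    """Stand-in for the motion cycle: advance until done or told to stop."""
    steps = 0
    while steps < max_steps and not stop_event.is_set():
        steps += 1
        time.sleep(0.001)  # simulated time per motion step
    return steps


worker = threading.Thread(target=vision_worker, args=([0.10, 0.20, 0.95],))
worker.start()
steps_done = motion_loop(max_steps=1000)
worker.join()
print(stop_event.is_set(), steps_done)
```

Because the motion loop only reads a flag, the vision side can take a variable amount of time per frame without blocking the motion sequence.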

Yes, provided the legacy robotic arm can accept external control commands via standard industrial protocols (like CAN, RS485, or Modbus). The Jetson Orin Nano acts as a 'smart gateway', adding dynamic intelligence to otherwise rigid, pre-programmed legacy hardware.

Oppila, operating from major tech hubs in India, provides comprehensive end-to-end integration services—from designing custom carrier boards for the NVIDIA Jetson platform to developing the Linux drivers and AI software stacks required to bring your smart robotic vision to life.