Edge AI-Based Object Detection & AI-Triggered Controls with Sony Cameras

Artificial Intelligence is redefining how camera systems operate in industrial and enterprise environments. Traditional cameras capture footage. Intelligent cameras analyze, decide, and act.

In this implementation, we demonstrate how Sony B2B cameras integrated with an NVIDIA Jetson edge AI platform enable real-time object detection, tracking, and AI-triggered control mechanisms — all running at the edge with low latency.

This blog explains the complete architecture, AI processing pipeline, and intelligent control workflow powering this system.

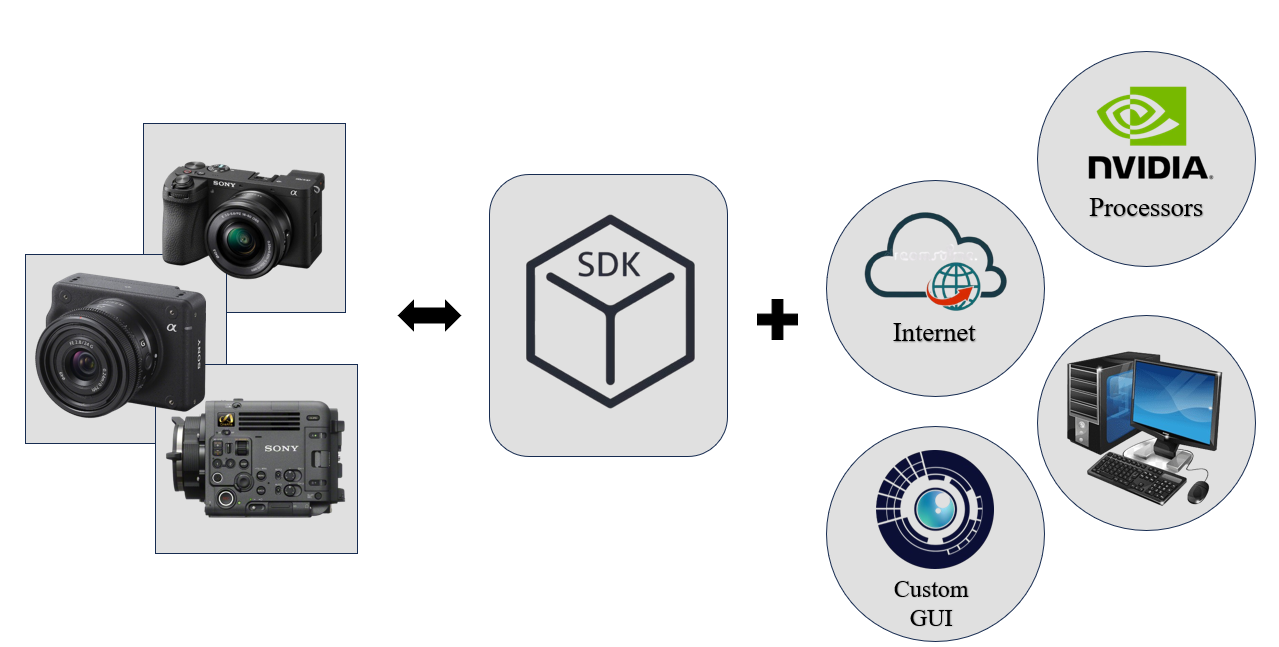

System Overview – Intelligent Vision Architecture

The system integrates the following core components:

- Sony α6700 and ILX-LR1 cameras

- Sony Remote SDK

- NVIDIA Jetson Orin platform (NX / AGX / Nano)

- Custom-built GUI

- Multi-camera connectivity (WiFi / USB)

- HDMI / DP monitoring output

What This Enables

- Remote camera configuration

- Multi-camera synchronization

- GPU-accelerated AI inference

- Real-time object detection & tracking

- AI-triggered automation

- Scalable edge deployment

This architecture transforms high-performance Sony cameras into programmable AI-enabled vision nodes.

1. Remote SDK Integration – Full Camera Control

Using Sony’s Remote SDK, the system provides complete programmatic control over camera parameters.

Features Enabled by Remote SDK

- Remote shutter control

- Exposure & ISO adjustment

- Focus control

- Image capture automation

- SDK-based integration into custom applications

Workflow

- Camera connects via USB or WiFi.

- Remote SDK establishes communication.

- Custom GUI interfaces with SDK.

- NVIDIA platform handles AI processing.

- Results displayed locally or accessed remotely.

This provides seamless integration for robotics, industrial automation, and smart monitoring systems.
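As a rough illustration of how a custom application might sit on top of the SDK, here is a minimal Python sketch. The `CameraBackend` interface, the `FakeCamera` stand-in, and the property names `iso` and `shutter_speed_s` are all hypothetical and are not part of the Sony Remote SDK; a real backend would wrap the SDK's own calls behind the same two methods.

```python
from dataclasses import dataclass, field
from typing import Protocol


class CameraBackend(Protocol):
    """Hypothetical interface; a real backend would wrap the Sony Remote SDK."""

    def set_property(self, name: str, value: object) -> None: ...

    def trigger_shutter(self) -> None: ...


@dataclass
class FakeCamera:
    """In-memory stand-in so the sketch runs without a camera attached."""

    properties: dict = field(default_factory=dict)
    shots: int = 0

    def set_property(self, name: str, value: object) -> None:
        self.properties[name] = value

    def trigger_shutter(self) -> None:
        self.shots += 1


def capture_with_settings(camera: CameraBackend, iso: int, shutter_s: float) -> None:
    """Apply exposure settings, then release the shutter once."""
    if iso <= 0 or shutter_s <= 0:
        raise ValueError("iso and shutter_s must be positive")
    camera.set_property("iso", iso)
    camera.set_property("shutter_speed_s", shutter_s)
    camera.trigger_shutter()


camera = FakeCamera()
capture_with_settings(camera, iso=400, shutter_s=1 / 250)
print(camera.properties, camera.shots)
```

Keeping the GUI and AI code dependent only on a small interface like this means the same logic can be exercised without hardware and later pointed at a real camera.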

Block Diagram:

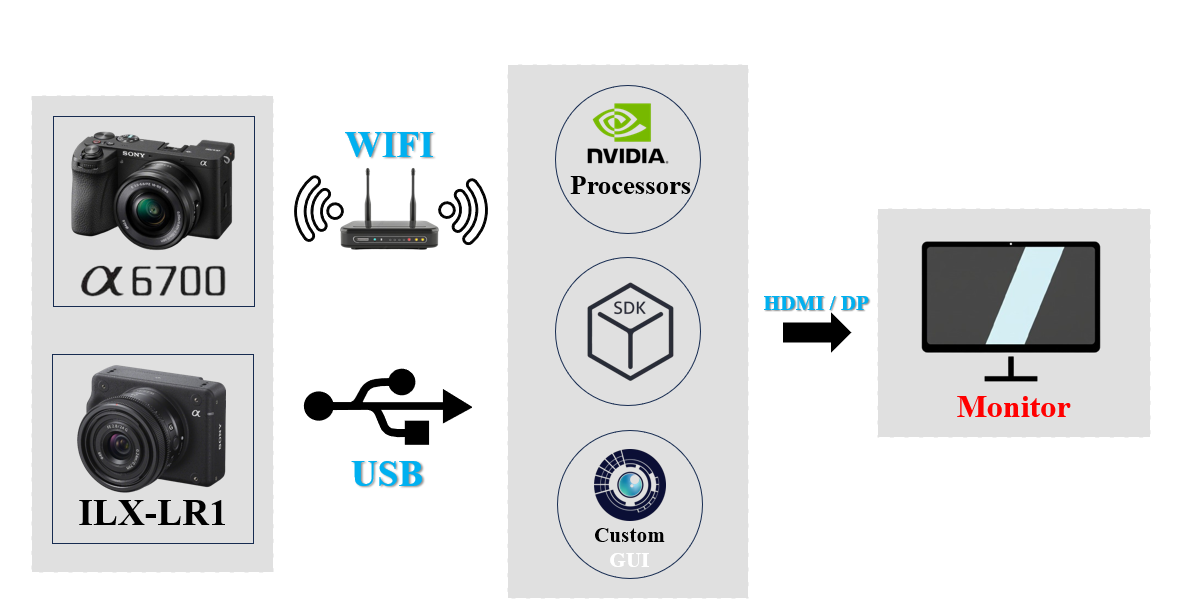

2. Multi-Camera Connection Setup

The system supports Ethernet, WiFi and USB-based camera connectivity.

Key Benefits

- Low-latency edge streaming

- Reliable wired or wireless deployment

- Real-time monitoring

- Flexible industrial configuration

The modular setup makes it suitable for mobile platforms, smart factories, or centralized monitoring systems.
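One way to keep a mixed-transport deployment manageable is a small camera registry that is validated at startup. The sketch below is an assumption about how such a registry could look: the `CameraConfig` fields, the camera names, and the IP address are made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Sequence


class Transport(Enum):
    USB = "usb"
    WIFI = "wifi"
    ETHERNET = "ethernet"


@dataclass(frozen=True)
class CameraConfig:
    name: str
    model: str
    transport: Transport
    address: str = ""  # IP address for network transports; unused for USB


def validate(configs: Sequence[CameraConfig]) -> None:
    """Reject duplicate names and network cameras without an address."""
    names = [c.name for c in configs]
    if len(names) != len(set(names)):
        raise ValueError("camera names must be unique")
    for c in configs:
        if c.transport is not Transport.USB and not c.address:
            raise ValueError(f"{c.name}: network transport needs an address")


# Hypothetical deployment: one USB camera and one WiFi camera.
CAMERAS = [
    CameraConfig("line-1", "ILX-LR1", Transport.USB),
    CameraConfig("gate", "α6700", Transport.WIFI, address="192.168.1.20"),
]
validate(CAMERAS)
```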

Block Diagram:

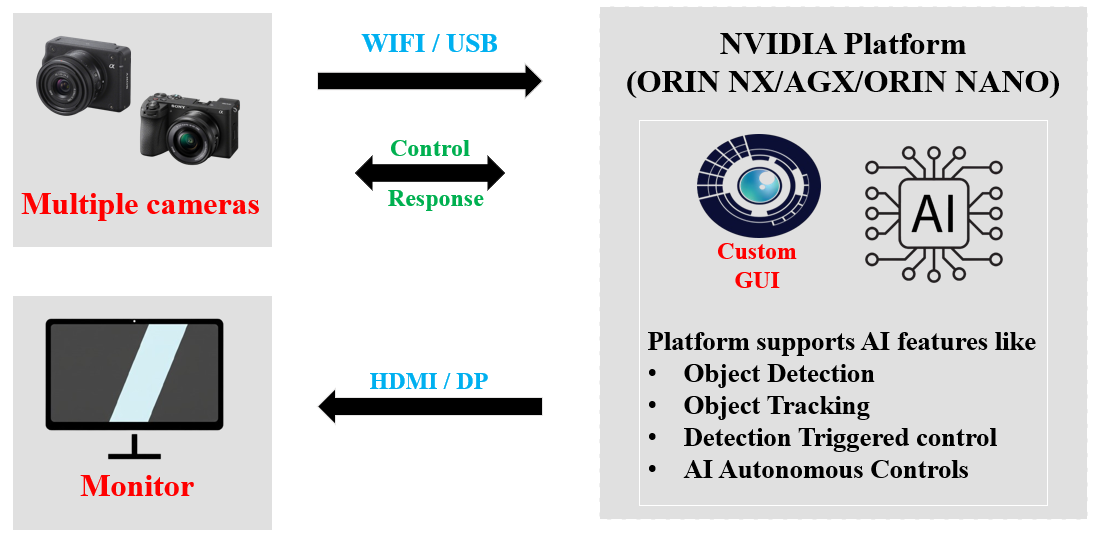

3. Image Processing on NVIDIA Jetson

The NVIDIA Jetson Orin series delivers high-performance AI processing directly at the edge.

Supported Jetson Platforms

- Jetson Orin NX

- Jetson AGX Orin

- Jetson Orin Nano

Image Processing Capabilities

- Multi-camera input handling

- Video stitching

- Panoramic feed generation

- Grid view monitoring

- Real-time AI inference

- Custom GUI visualization

Processing Pipeline

- Multiple camera streams captured.

- Frames processed by GPU.

- AI model performs object detection.

- Results rendered in GUI.

- Output displayed via HDMI/DP.

This allows scalable AI systems from dual-camera setups to complex multi-stream deployments.
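To make the grid view concrete, the following sketch computes where each stream's tile would land on the output canvas. It is only an illustration of the layout arithmetic, assuming a near-square grid and equal tile sizes; the function name `grid_layout` is hypothetical and the actual GUI may arrange feeds differently.

```python
import math
from typing import List, Tuple


def grid_layout(n_streams: int, canvas_w: int, canvas_h: int) -> List[Tuple[int, int, int, int]]:
    """Return one (x, y, width, height) tile per stream in a near-square grid."""
    if n_streams < 1:
        raise ValueError("need at least one stream")
    cols = math.ceil(math.sqrt(n_streams))
    rows = math.ceil(n_streams / cols)
    tile_w, tile_h = canvas_w // cols, canvas_h // rows
    return [
        ((i % cols) * tile_w, (i // cols) * tile_h, tile_w, tile_h)
        for i in range(n_streams)
    ]


# Three cameras on a 1920x1080 monitor give a 2x2 grid with one empty cell.
for tile in grid_layout(3, 1920, 1080):
    print(tile)
```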

Block Diagram:

Core Focus: AI-Based Object Detection & Triggered Controls

Unlike traditional monitoring systems, this solution performs real-time detection and automatically triggers actions.

AI Capabilities

- Object Detection

- Object Tracking

- Detection-Oriented Control (AI Control)
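The post does not say which tracking algorithm is used. As a generic illustration of how detections can be linked into tracks across frames, here is a minimal greedy IoU (intersection over union) matcher. The class name, the 0.3 threshold, and the policy of dropping unmatched tracks immediately are simplifying assumptions; production trackers usually add motion models and track aging.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class IouTracker:
    """Greedy frame-to-frame association; unmatched tracks are dropped."""

    def __init__(self, min_iou: float = 0.3) -> None:
        self.min_iou = min_iou
        self.tracks: Dict[int, Box] = {}
        self._next_id = 0

    def update(self, detections: List[Box]) -> Dict[int, Box]:
        unmatched = dict(self.tracks)
        updated: Dict[int, Box] = {}
        for box in detections:
            best_id, best_score = None, self.min_iou
            for track_id, previous in unmatched.items():
                score = iou(box, previous)
                if score >= best_score:
                    best_id, best_score = track_id, score
            if best_id is None:
                # No existing track overlaps enough: start a new one.
                best_id = self._next_id
                self._next_id += 1
            else:
                del unmatched[best_id]
            updated[best_id] = box
        self.tracks = updated
        return updated


tracker = IouTracker()
print(tracker.update([(0, 0, 10, 10)]))        # new track
print(tracker.update([(1, 1, 11, 11)]))        # same object, slightly moved
print(tracker.update([(100, 100, 110, 110)]))  # far away: treated as a new object
```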

AI Control Loop Explained

The closed-loop AI system operates as follows:

- Camera captures frame.

- Frame sent to NVIDIA GPU.

- AI model performs detection.

- Detection evaluated.

- Control signal generated.

- External system responds.

- Loop continues in real time.

This enables intelligent automation without human intervention.
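The loop above can be sketched in a few lines of Python. Everything here is a placeholder: `fake_detect` stands in for the GPU model, `stop_on_person` for the evaluation step, and appending to a list stands in for signalling an external system. The 0.6 confidence threshold is an arbitrary example value.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Detection:
    label: str
    confidence: float


def run_control_loop(
    frames: Iterable[object],
    detect: Callable[[object], list],
    decide: Callable[[list], Optional[str]],
    actuate: Callable[[str], None],
) -> int:
    """Capture -> detect -> evaluate -> actuate; returns the number of commands sent."""
    commands_sent = 0
    for frame in frames:
        detections = detect(frame)
        command = decide(detections)
        if command is not None:
            actuate(command)
            commands_sent += 1
    return commands_sent


def fake_detect(frame: object) -> list:
    # Placeholder for model inference on the GPU.
    return [Detection("person", 0.9)] if frame == "person" else []


def stop_on_person(detections: list) -> Optional[str]:
    hit = any(d.label == "person" and d.confidence >= 0.6 for d in detections)
    return "STOP" if hit else None


log: list = []
sent = run_control_loop(["empty", "person", "empty"], fake_detect, stop_on_person, log.append)
print(sent, log)
```

Passing the detect, decide, and actuate steps in as functions keeps the loop itself independent of any particular model or actuator.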

Real-Time AI-Triggered Actions

Depending on application requirements, the system can:

- Trigger alarms on specific object detection

- Stop machinery when unsafe objects are identified

- Auto-zoom into detected subjects

- Control robotic arms

- Activate security responses

- Initiate tracking-based adjustments

This transforms the camera from a passive sensor into an active decision-making system.
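A common way to wire detections to actions is a rule table with a per-rule cooldown, so that one object lingering in view does not fire the same alarm on every frame. The sketch below assumes this design; the `Rule` fields, the action names, and the threshold and cooldown values are illustrative only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rule:
    label: str
    min_confidence: float
    action: str
    cooldown_s: float = 5.0
    last_fired: float = float("-inf")


def evaluate(rules: List[Rule], label: str, confidence: float, now: float) -> List[str]:
    """Return the actions to fire for one detection observed at time `now` (seconds)."""
    fired = []
    for rule in rules:
        if rule.label != label or confidence < rule.min_confidence:
            continue
        if now - rule.last_fired < rule.cooldown_s:
            continue  # still cooling down; suppress the repeat
        rule.last_fired = now
        fired.append(rule.action)
    return fired


rules = [
    Rule("person", 0.6, "sound_alarm"),
    Rule("forklift", 0.8, "stop_conveyor", cooldown_s=1.0),
]
print(evaluate(rules, "person", 0.9, now=0.0))  # fires
print(evaluate(rules, "person", 0.9, now=1.0))  # suppressed by the cooldown
print(evaluate(rules, "person", 0.9, now=6.0))  # fires again
```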

Why Edge AI with NVIDIA Jetson?

Edge processing offers significant advantages:

- Low-latency real-time inference

- No dependency on cloud connectivity

- Reduced bandwidth usage

- Secure on-premise data processing

- Multi-stream AI capability

With GPU acceleration and TensorRT-optimized models, the system is designed to sustain real-time inference on high-resolution, multi-camera input.
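A quick way to reason about whether a multi-camera setup stays real-time is a per-frame time budget. The sketch below assumes, pessimistically, that streams are processed one after another; the stage timings are placeholder numbers, not measurements from this system.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame period, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def fits_budget(stage_ms: dict, fps: float, streams: int = 1) -> bool:
    """True if all stages, repeated serially for every stream, fit in one frame period."""
    return sum(stage_ms.values()) * streams <= frame_budget_ms(fps)


# Placeholder per-frame stage times in milliseconds (assumed, not measured).
stages = {"capture": 2.0, "inference": 10.0, "render": 3.0}

print(round(frame_budget_ms(30), 1))         # about 33.3 ms per frame at 30 fps
print(fits_budget(stages, fps=30, streams=2))
print(fits_budget(stages, fps=30, streams=3))
```

With these example numbers, two streams fit in a 30 fps frame period and three do not, which is the point at which batching or a lower frame rate would have to be considered.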

Custom GUI – Enterprise-Ready Monitoring

The custom-built GUI acts as a centralized control interface:

- Live camera feeds

- Multi-camera grid view

- AI detection overlays

- Event logging dashboard

- Camera parameter adjustments

- Remote control interface

This provides a complete operational dashboard for industrial environments.

Industry Applications

This AI-driven vision solution is ideal for:

- Smart industrial automation

- Robotics control systems

- Intelligent surveillance

- Manufacturing quality inspection

- Perimeter security

- Traffic monitoring

- Smart agriculture

- Defense applications

Key Advantages of This AI Vision System

- Real-time object detection

- AI-triggered automated control

- Multi-camera scalability

- Edge-based NVIDIA GPU processing

- Sony Remote SDK integration

- Customizable GUI

- Low-latency decision making

Conclusion – Intelligent Vision That Acts

By integrating Sony B2B cameras with NVIDIA Jetson edge AI platforms, we have developed a scalable, intelligent vision ecosystem capable of:

- Detecting

- Tracking

- Deciding

- Acting

This is not just video monitoring. It is AI-powered automation.

The future of intelligent systems lies in cameras that not only see — but understand and respond in real time.

FAQ

Traditional cameras passively capture and record footage. An AI-enabled system using Sony B2B cameras (like the α6700 or ILX-LR1) and NVIDIA Jetson edge platforms can actively analyze the feed, detect objects, and trigger automated controls in real time without human intervention.

The Sony Remote SDK provides programmatic access to deep camera functionalities. This allows custom GUI and edge software to remotely control shutter timing, exposure, ISO, and focus, seamlessly integrating Sony cameras into automated enterprise workflows.

The NVIDIA Jetson Orin series (Nano, NX, and AGX) delivers the GPU acceleration required to process multiple high-resolution camera streams simultaneously, run AI inference at the edge, and trigger actions with low latency, all without cloud dependency.

AI-triggered controls execute when an Edge AI model detects a specific object or anomaly in the video feed and automatically sends a command to an external system — such as stopping a robotic arm, sounding an alarm, or locking a gate — based entirely on that visual analysis.

The architecture supports multiple cameras connected via USB, Ethernet, and WiFi. The NVIDIA Jetson GPU can process parallel streams to create panoramic feeds, grid views, and synchronized multi-angle AI inference.

No cloud connection is required. All video processing, object detection, and control logic run locally on the NVIDIA Jetson platform, which keeps latency low, keeps video data on-site, and allows uninterrupted operation in remote or offline industrial facilities.

This architecture is demonstrated using the Sony α6700 and the ultra-compact Sony ILX-LR1 — an industrial-grade camera module ideal for robotics, drones, and compact edge AI integrations due to its lightweight, screenless design.

By running TensorRT-optimized AI models on the local Jetson GPU, the system eliminates cloud round-trip latency. This allows the system to detect a safety threat or product defect and trigger a physical hardware response in milliseconds.

With custom model training, the system can identify virtually any object: defective products on a production line, unauthorized personnel in restricted zones, specific vehicle types in traffic monitoring, or precision components in robotic bin-picking applications.

Beyond detection, the NVIDIA Jetson AI pipeline supports continuous object tracking. In security or robotics use cases, the system can dynamically adjust auto-zoom, issue tracking coordinates to PTZ motors, or follow targets across multiple camera zones.

The Jetson Orin platform supports HDMI and DisplayPort outputs. The custom GUI provides an enterprise dashboard displaying live multi-camera grids, AI detection overlays, event logs, and direct camera control panels.

The system also suits agricultural use. A Sony ILX-LR1 mounted on a drone or automated agricultural platform, combined with Jetson edge AI, enables real-time crop disease detection, pest identification, and localized intervention triggers directly in the field, with no internet connection required.

Standard motion sensors trigger on any movement — wind, animals, or vehicles. Our Edge AI system classifies detected subjects specifically as 'person' or 'vehicle', dramatically reducing false alarms and enabling intelligent, contextual security responses.

The AI can also control the camera itself. Using the Sony Remote SDK in a closed loop with the Jetson AI, the system can automatically instruct the camera to adjust focus, exposure, or zoom when the AI detects a relevant subject, enabling adaptive imaging.

Closed-loop AI control means the system operates entirely on its own visual feedback. The camera sees → the GPU processes → the algorithm decides → a physical action is triggered. The result changes the environment, and the cycle continues — autonomously, in real time.

The Sony ILX-LR1 offers a full-frame sensor for outstanding low-light and high-resolution image quality in an ultra-minimalist form factor — no screen, no battery, no unnecessary bulk. This makes it the ideal dedicated sensor for Jetson robotics and drone integrations.

Recording can be continuous or event-driven. The system can record continuously, or save storage by recording clips only when a specific AI-triggered event (such as a safety violation or quality defect) occurs.
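Event-only recording is often built around a small ring buffer, so that a saved clip also includes the moments just before the trigger. The sketch below assumes that approach; `EventRecorder` and its `pre_roll` and `post_roll` frame counts are hypothetical names and values, and integers stand in for video frames.

```python
from collections import deque


class EventRecorder:
    """Keep the last `pre_roll` frames; on an event, save them plus `post_roll` more."""

    def __init__(self, pre_roll: int = 3, post_roll: int = 2) -> None:
        self._buffer = deque(maxlen=pre_roll)
        self._post_roll = post_roll
        self._remaining = 0  # frames still to record for the current event
        self.clip = []       # stand-in for frames written to storage

    def push(self, frame, event: bool) -> None:
        if event:
            if self._remaining == 0:
                # A new event starts: flush the buffered pre-roll frames first.
                self.clip.extend(self._buffer)
                self._buffer.clear()
            self._remaining = self._post_roll + 1
        if self._remaining > 0:
            self.clip.append(frame)
            self._remaining -= 1
        else:
            self._buffer.append(frame)


recorder = EventRecorder(pre_roll=2, post_roll=1)
for index in range(7):
    recorder.push(index, event=(index == 3))
print(recorder.clip)
```

In this run only the two frames before the event, the event frame, and one frame after it are saved; the rest are discarded as the buffer rolls over.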

The system can drive robotic arms, with the intelligent vision pipeline serving as their 'eyes'. The Jetson evaluates the camera feed and issues precise position or stop commands via CAN bus to robotic servo motors for picking, sorting, and motion interruption.

Because all video inference happens locally, right next to the camera, no video data needs to leave your facility or traverse the internet. This supports enterprise security requirements and compliance with local data privacy standards.

Oppila provides end-to-end integration services — from system architecture and custom carrier board design to Sony Remote SDK software development and NVIDIA Jetson AI model optimization — delivering fully enterprise-ready intelligent vision systems.